Putting Pen to Paper (Part 2)

This is part 2 of Richard Gould’s blog post about the summer project The Last Symphony.

Another requirement of the audio was that each object should have a unique sound that would play back when the item was collected. For those items that relate to specific stories, the sounds contain musical phrases that stem directly from the musical piece associated with that story. So when you truly hear the music on the screen with the truck, you’ve already heard segments of that piece from when you initially picked up the objects.

We Have The Technology

The technical component.

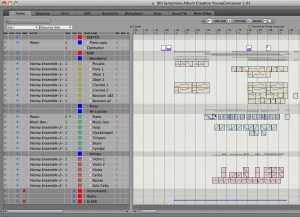

As far as how the music was scored technically, I composed the majority of the work in Digital Performer using sounds from Native Instruments and Vienna Instruments.

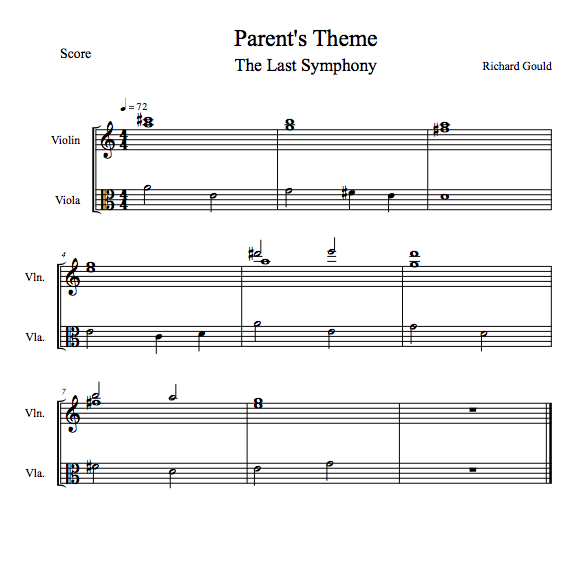

For the pieces that contain live players, parts were created using Finale 2011 notation software. Violin parts were sent to Richard Ludlow in L.A., who recorded them remotely and sent them back via Dropbox.

I recorded the cello, performed by Ro Rowan, in my apartment. As I didn’t have a professional studio to hand, I had to create a makeshift studio/fort. I used AKG C1000 & C3000 microphones for the cello.

I’m actually thrilled with how the live instrumentation came out, and it certainly elevated the quality of the music. Including just a couple of real instruments can bring an otherwise fabricated score to life and, to many listeners, make it indistinguishable from the real thing.

The next process involved planning how each piece would be split into its respective four layers. This proved to be challenging, as the entry of each layer had to be both noticeable and musically effective. For this reason, it was important for each layer to have some element in it that was playing music at all times. This was the breakdown for Robert’s childhood theme, which later became “Fun & Games” on the soundtrack:

Layer 1: Bassoon 1&2, Piano

Layer 2: Clarinet 1&2, Horns, Glockenspiel

Layer 3: Oboes 1&2, Violins, Violas, Cellos, Basses

Layer 4: Flute, Trumpets, Trombones

With the layers then decided upon, I created downmixes of each layer. I then took any musical content that took place before beat one of measure one (a pick-up for example) and placed that audio content at the end of the looped section. Similarly, I then took any content after the last beat where the piece should loop (such as a reverb tail) and placed that at the beginning of the piece. This is essential to creating seamless looping points. The result is a musical file where the end sets up the beginning, and the beginning completes the end!
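The wrap-around described above can be sketched in a few lines. This is only an illustration, assuming the audio is a plain list of sample values and that "placing" the pick-up and tail means mixing (summing) them into the loop body; `make_seamless_loop`, `loop_start` and `loop_end` are hypothetical names, not part of any tool mentioned here.

```python
def make_seamless_loop(samples, loop_start, loop_end):
    """Fold the pick-up and the tail into the loop body by summing samples.

    samples[:loop_start]          -> pick-up before beat one of measure one
    samples[loop_start:loop_end]  -> the section that should loop
    samples[loop_end:]            -> tail after the loop point (e.g. reverb)
    """
    pickup = samples[:loop_start]
    body = list(samples[loop_start:loop_end])
    tail = samples[loop_end:]

    if len(pickup) > len(body) or len(tail) > len(body):
        raise ValueError("pick-up and tail must each fit inside the loop body")

    # The pick-up is mixed into the END of the loop, so it leads into beat one.
    offset = len(body) - len(pickup)
    for i, value in enumerate(pickup):
        body[offset + i] += value

    # The tail is mixed into the START of the loop, so the ending rings over it.
    for i, value in enumerate(tail):
        body[i] += value

    return body
```

For example, `make_seamless_loop([1, 2, 10, 20, 30, 40, 5, 6], 2, 6)` folds the two pick-up samples into the last two loop samples and the two tail samples into the first two.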

With the layered loops then created, I had to calculate the precise length of each loop so that our music engine (designed by GAMBIT’s Andrew Grant) could loop the mp3 files at the right moment. This matters because mp3s add empty bits to the end of a file to make it a specific bit length, which leaves a small gap of silence between the end and start of the loop and causes an audible pop. I used Pro Tools to find these values, zooming in as far as possible and then eyeballing the loop length to the closest thousandth of a second.
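A rough sketch of where that gap comes from, assuming MPEG-1 Layer III frames of 1152 samples each and ignoring the extra delay that real encoders typically add at the start of a file. The function names are hypothetical and are not the GAMBIT engine's API; the point is only that the true loop length and the padded file length differ by a few milliseconds.

```python
import math

SAMPLES_PER_FRAME = 1152  # MPEG-1 Layer III frame size, in samples


def loop_length_seconds(num_samples, sample_rate=44100):
    """True musical length of the loop, the value a music engine needs."""
    return num_samples / sample_rate


def padded_length_samples(num_samples):
    """Length after rounding up to a whole number of mp3 frames."""
    frames = math.ceil(num_samples / SAMPLES_PER_FRAME)
    return frames * SAMPLES_PER_FRAME


def padding_seconds(num_samples, sample_rate=44100):
    """Silence appended by frame padding alone (encoder delay not included)."""
    extra = padded_length_samples(num_samples) - num_samples
    return extra / sample_rate
```

Under these assumptions, a 10-second loop at 44.1 kHz (441,000 samples) is padded to 383 frames, which adds 216 samples, or just under 5 milliseconds of silence.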

I Have Something To Compress

Compressing the assets for implementation.

The last and most painful process was compression. At this point, all my audio assets totaled 380 MB as uncompressed assets (44.1 kHz/16-bit). I had been budgeted a 15 MB maximum, so I began the inevitable and arduous process of compressing each file individually. By taking an individual approach, I could minimize the degradation in quality, as some of the audio was more susceptible than others to the artifacts that result from compression. More complex and varied content (such as a snare drum) required higher bitrates than, say, a solo flute (which has a comparatively simple waveform). My bitrates ranged from a minimum of 40kbps to a maximum of 80kbps. Whilst I went through this process, I made detailed notes of which files I would like to revisit if I came in above or below my budget and had to make a second pass.

Example:

Books @ 56kbps (could go lower)

Chess Set @ 56kbps (could go higher)

Sword @ 80kbps

Childhood Theme Layer 1 @ 40kbps (could go higher)

Childhood Theme Layer 2 @ 56kbps (could go lower)
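As a rough illustration of how notes like these relate to a size budget, the sketch below estimates file size from duration and bitrate, assuming constant-bitrate mp3s and ignoring headers and tags. The durations are placeholders invented for the example (the real lengths are not given here), and the function names are hypothetical.

```python
def mp3_size_bytes(duration_seconds, kbps):
    """Approximate size of a constant-bitrate mp3: bits per second / 8 * time."""
    return duration_seconds * kbps * 1000 / 8


def total_size_mb(assets):
    """assets: iterable of (name, duration_seconds, kbps) tuples."""
    total_bytes = sum(mp3_size_bytes(d, k) for _, d, k in assets)
    return total_bytes / 1_000_000


# Bitrates come from the notes above; durations are made-up placeholders.
assets = [
    ("Books", 2.0, 56),
    ("Chess Set", 2.0, 56),
    ("Sword", 2.0, 80),
    ("Childhood Theme Layer 1", 60.0, 40),
    ("Childhood Theme Layer 2", 60.0, 56),
]

print(f"Estimated total: {total_size_mb(assets):.3f} MB")
```

Changing a single bitrate in the list and re-running the total shows quickly how much room a second pass would free up or consume.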

High Quality mp3 (192kbps): Film Reel at 192kbps

In-Game mp3 (48kbps): Film Reel at 48kbps

High Quality mp3 (192kbps): Orchestral Tuning at 192kbps

In-Game mp3 (48kbps): Orchestral Tuning at 56kbps

When I finished this process I came in at 13.5 MB. That’s roughly a 96% memory reduction from the original uncompressed assets. I had hoped to improve the quality of some of the musical content, but it was (rightly) decided that to lessen the memory budget of the whole game, I should leave all assets as they were.
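The arithmetic behind that figure is simple enough to check, using only the sizes quoted in this post (380 MB uncompressed, 13.5 MB compressed, 15 MB budget). The function name is hypothetical.

```python
def reduction_percent(original_mb, compressed_mb):
    """Percentage by which the compressed size is smaller than the original."""
    return (1 - compressed_mb / original_mb) * 100


original_mb = 380.0
compressed_mb = 13.5
budget_mb = 15.0

print(f"Reduction: {reduction_percent(original_mb, compressed_mb):.1f}%")
print(f"Headroom left in budget: {budget_mb - compressed_mb:.1f} MB")
```

The reduction works out to a little over 96%, with 1.5 MB of the budget left unused.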

This process was very revealing. I discovered that the sound of uncompressed audio in the context of a web-based game was off-putting. If a game is streamed online, the likelihood is that the audio is pretty heavily compressed. Whenever I tested the game with my uncompressed assets, it didn’t seem quite right. It sounded too good… in a bad way. I would liken it to watching a movie on your iPod with a 5.1 home theater system playing back the sound. Despite the fact that the audio quality is fantastic, it just isn’t quite right. You don’t serve a Big Mac with a garnish of caviar!

So whilst we as composers often lament the process of compression, I must admit that I believe it’s actually necessary, not just because of memory budgets, but for the aesthetic that comes with compression.